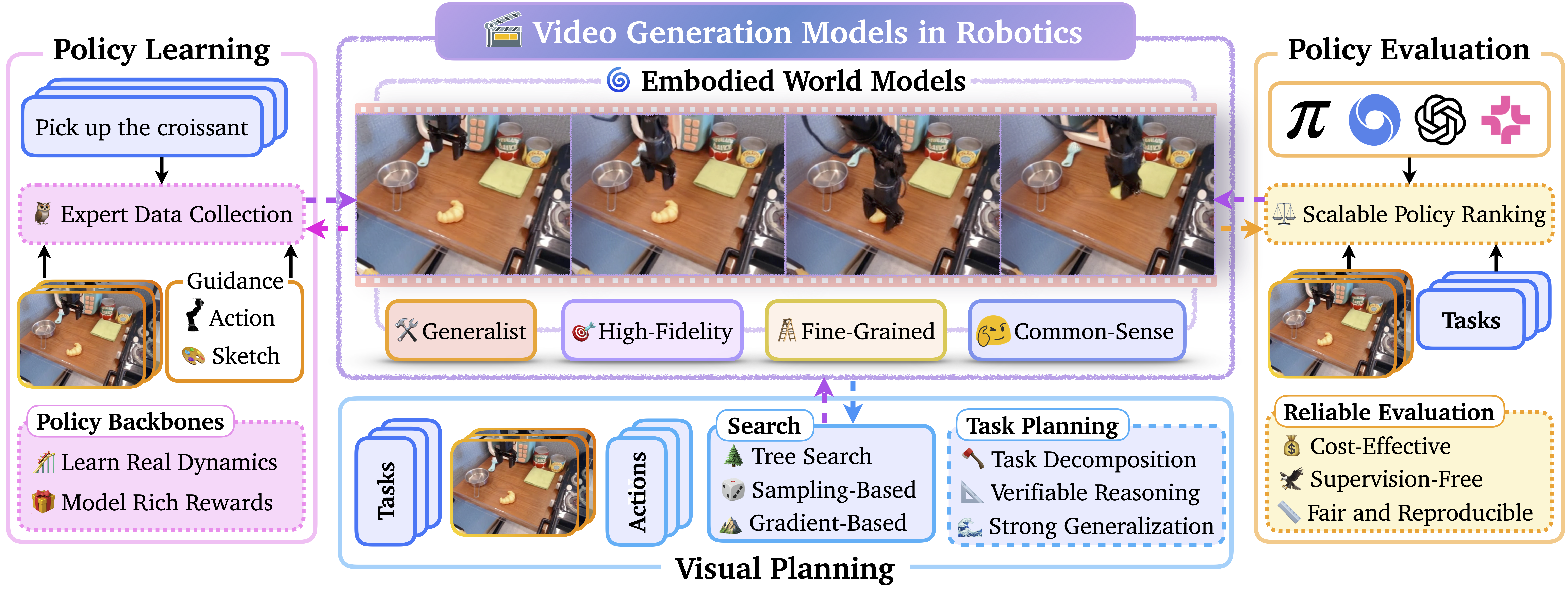

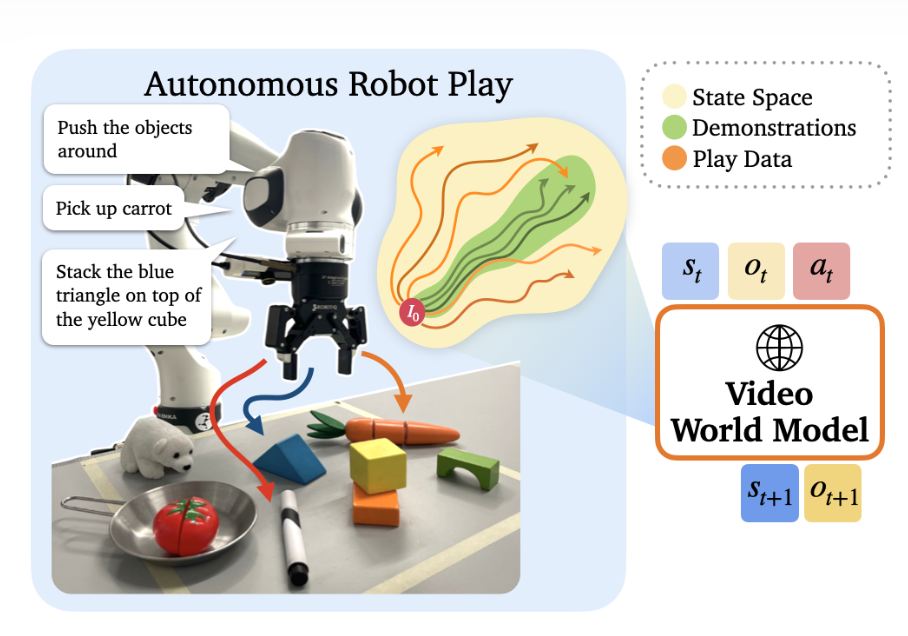

PlayWorld: Learning Robot World Models from Autonomous Play

TL;DR: We introduce PlayWorld, a system for training video-based robot world models from autonomous self-play rather than human demonstrations. The resulting models generate high-quality, physically consistent predictions for contact-rich interactions. These predictions enable reinforcement learning in simulation, which yields a 65% improvement in real-world policy success rates.